Submitted to YouTube by "Wakaruru"

Wednesday, July 2, 2008

Hot Zone prototype (Wakaruru)

Submitted to YouTube by "Wakaruru"

Sunday, June 22, 2008

Lund Eye Tracking Academy

"This text is about how to record good quality eye-tracking data from commercially available video-oculographic eye-tracking system, and how to derive and use the measures that an eye-tracker can give. The ambition is to cover as many as possible of the measures used in science and applied research. The need for a guide on how to use eye-tracking data has grown during the past years, as an effect of increasing interest in eye-tracking research. Due to the versatility of new measurement techniques and the important role of human vision in all walks of life, eye-tracking is now used in reading research, neurology, advertisement studies, psycholinguistics, human factors, usability studies, scene perception, and many other fields "

Download the document (PDF, 93 pages, 19 MB)

Tuesday, June 17, 2008

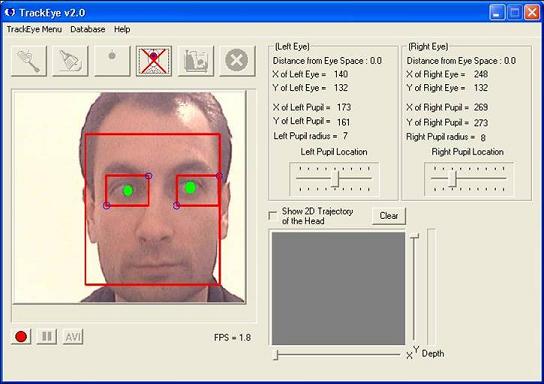

Open Source Eye Tracking

Download the executable as well as the source code. See the extensive documentation.

You will also need to download the OpenCV library.

"The purpose of the project is to implement a real-time eye-feature tracker with the following capabilities:

- Real-time face tracking with scale and rotation invariance

- Tracking the eye areas individually

- Tracking eye features

- Eye gaze direction finding

- Remote controlling using eye movements

The implemented project consists of three components:

- Face detection: Performs scale invariant face detection

- Eye detection: Both eyes are detected as a result of this step

- Eye feature extraction: Features of eyes are extracted at the end of this step

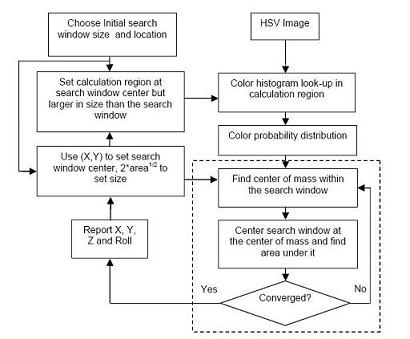

Two different methods for face detection were implemented in the project:

- Continuously Adaptive Mean Shift (CamShift) algorithm

- Haar Face Detection method

Two different methods of eye tracking were implemented in the project:

- Template-Matching

- Adaptive EigenEye Method"
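
To make the template-matching idea above a little more concrete, here is a minimal sketch in plain Python. It is not the project's code (the project builds on OpenCV); it only slides a small template over a grayscale grid and keeps the position with the lowest sum of squared differences. The function name `match_template` and the toy `frame` and `eye_patch` values are assumptions made up for illustration.

```python
def match_template(image, template):
    """Return ((row, col), score) of the best match by sum of squared differences.

    `image` and `template` are lists of rows of grayscale values.
    A lower score means a closer match; 0 means an exact match.
    """
    image_h, image_w = len(image), len(image[0])
    templ_h, templ_w = len(template), len(template[0])
    best_pos, best_score = None, float("inf")
    # Try every position where the template fits entirely inside the image.
    for row in range(image_h - templ_h + 1):
        for col in range(image_w - templ_w + 1):
            score = sum(
                (image[row + dr][col + dc] - template[dr][dc]) ** 2
                for dr in range(templ_h)
                for dc in range(templ_w)
            )
            if score < best_score:
                best_pos, best_score = (row, col), score
    return best_pos, best_score


# Hypothetical 4x5 "frame" with a dark 2x2 patch standing in for an eye.
frame = [
    [9, 9, 9, 9, 9],
    [9, 1, 2, 9, 9],
    [9, 3, 0, 9, 9],
    [9, 9, 9, 9, 9],
]
eye_patch = [
    [1, 2],
    [3, 0],
]
position, score = match_template(frame, eye_patch)
```

A real tracker would run this on camera frames and restrict the search to the detected face region; the brute-force loop here is only meant to show the principle.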

Wednesday, June 11, 2008

Response to demonstration video

I wish to thank everyone for their feedback. At times during the long days and nights in the lab I've been wondering if this would be just another paper in the pile. The response has proven the interest and gives me motivation to continue the work. Right now I feel that this is just the beginning, and I am determined to take it to the next level.

Please do not hesitate to contact me with ideas and suggestions.

Thank you.

Monday, June 9, 2008

Video demonstration online

Thesis release

Thursday, June 5, 2008

MedioVis at University of Konstanz

Tuesday, June 3, 2008

Eye typing at the Bauhaus University of Weimar

"Qwerty is based on dwell time selection. Here the user has to stare for 500 ms a determinate character to select it. QWERTY served us, as comparison base line for the new eye typing systems. It was implemented in C++ using QT libraries."

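Dwell time selection as described above can be sketched in a few lines. This is only an illustration in Python, not the Weimar implementation (which was written in C++ with Qt). The class name `DwellSelector`, the `update` method and the sample format are assumptions; the 500 ms threshold is the one quoted in the text.

```python
from dataclasses import dataclass
from typing import Optional

DWELL_MS = 500  # dwell threshold quoted in the text


@dataclass
class DwellSelector:
    """Fires a key once the gaze has rested on it for `dwell_ms`."""
    dwell_ms: int = DWELL_MS
    current_key: Optional[str] = None
    dwell_start_ms: int = 0
    fired: bool = False

    def update(self, key: Optional[str], timestamp_ms: int) -> Optional[str]:
        """Feed one gaze sample; `key` is the key under the gaze or None."""
        if key != self.current_key:
            # Gaze moved to another key (or off the keyboard): restart the timer.
            self.current_key = key
            self.dwell_start_ms = timestamp_ms
            self.fired = False
            return None
        long_enough = timestamp_ms - self.dwell_start_ms >= self.dwell_ms
        if key is not None and not self.fired and long_enough:
            self.fired = True  # do not repeat the key while the gaze stays on it
            return key
        return None


def type_samples(samples):
    """Run (key, timestamp_ms) samples through a selector and return the typed text."""
    selector = DwellSelector()
    typed = []
    for key, timestamp_ms in samples:
        selected = selector.update(key, timestamp_ms)
        if selected is not None:
            typed.append(selected)
    return "".join(typed)


# Hypothetical gaze samples: a glance away from "i" at 1250 ms restarts its timer.
samples = [("h", 0), ("h", 300), ("h", 500), ("h", 800),
           ("i", 900), ("i", 1200), (None, 1250), ("i", 1300), ("i", 1800)]
text = type_samples(samples)
```

Firing only once per fixation is a design choice of this sketch; a real keyboard might instead repeat the key after a second dwell period.
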
IWrite

"A simple way to perform a selection based on saccadic movement is to select an item by looking at it and confirm its selection by gazing towards a defined place or item. Iwrite is based on screen buttons. We implemented an outer frame as screen button. That is to say, characters are selected by gazing towards the outer frame of the application. This lets the text window in the middle of the screen for comfortable and safe text review. The order of the characters, parallel to the display borders, should reduce errors like the unintentional selection of items situated in the path as one moves across to the screen button.The strength of this interface lies on its simplicity of use. Additionally, it takes full advantage of the velocity of short saccade selection. Number and symbol entry mode was implemented for this editor in the lower frame. Iwrite was implemented in C++ using QT libraries."

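One way to read the look-then-confirm scheme above is as a small state machine: the last character looked at is "armed", and a saccade onto the outer frame commits it. The Python sketch below follows that reading only. The region labels `"FRAME"` and `"TEXT"`, the function name `iwrite_decode`, and the rule that looking at the text window cancels a pending character are all assumptions for illustration, not details taken from IWrite itself.

```python
FRAME = "FRAME"  # hypothetical label for the outer frame (the screen button)
TEXT = "TEXT"    # hypothetical label for the central text window


def iwrite_decode(regions):
    """Turn a sequence of gazed-at regions into typed text.

    Each element is a character (the gaze rests on that key), FRAME, or TEXT.
    """
    typed = []
    armed = None  # character waiting for confirmation
    for region in regions:
        if region == FRAME:
            if armed is not None:
                typed.append(armed)  # saccade to the frame confirms the character
                armed = None
        elif region == TEXT:
            armed = None  # assumption: reviewing the text cancels a pending character
        else:
            armed = region  # the most recently fixated character replaces any earlier one
    return "".join(typed)


# "g" is confirmed, "a" is cancelled by a look at the text, "z" is replaced by "e".
typed_text = iwrite_decode(["g", FRAME, "a", TEXT, FRAME, "z", "e", FRAME])
```

No dwell timer appears anywhere in this loop, which is the point of the approach: selection speed is bounded by saccade speed rather than by a fixed waiting time.
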
"Pie menus have already been shown to be powerful menus for mouse or stylus control. They are two-dimensional, circular menus, containing menu items displayed as pie-formed slices. Finding a trade-off between user interfaces for novice and expert users is one of the main challenges in the design of an interface, especially in gaze control, as it is less conventional and utilized than input controlled by hand. One of the main advantages of pie menus is that interaction is very easy to learn. A pie menu presents items always in the same position, so users can match predetermined gestures with their corresponding actions. We therefore decided to transfer pie menus to gaze control and try it out for an eye typing approach. We designed the Pie menu for six items and two depth layers. With this configuration we can present (6 x 6) 36 items. The first layer contains groups of five letters ordered in pie slices.."

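The arithmetic behind the pie menu (six slices, two layers, 6 x 6 = 36 items) can be sketched as follows. This is an illustrative Python sketch, not the authors' code: the slice numbering (slice 0 centred at 12 o'clock, counted clockwise), the function names, and the screen coordinates in the example are assumptions.

```python
import math

SLICES = 6  # items per layer, as in the quoted design


def slice_index(gaze_x, gaze_y, center_x, center_y, slices=SLICES):
    """Map a gaze point to a pie slice.

    Assumed layout: slice 0 is centred at 12 o'clock and slices are
    numbered clockwise. Screen y grows downward.
    """
    dx = gaze_x - center_x
    dy = center_y - gaze_y  # flip so that "up" is positive
    angle = math.degrees(math.atan2(dx, dy)) % 360  # 0 = up, increasing clockwise
    width = 360 / slices
    # Shift by half a slice so that slice 0 straddles the 12 o'clock direction.
    return int(((angle + width / 2) % 360) // width)


def item_index(first_layer_slice, second_layer_slice, slices=SLICES):
    """Combine the two layer selections into one index in 0..35."""
    return first_layer_slice * slices + second_layer_slice


# Hypothetical menu centred at (400, 300): looking up, then down.
group = slice_index(400, 100, 400, 300)   # straight up
slot = slice_index(400, 500, 400, 300)    # straight down
selected = item_index(group, slot)
```

With two selections of one slice each, every one of the 36 items is reachable in exactly two gaze gestures, which is why the items can always stay in the same position.
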
StarWrite

"In StarWrite, selection is also based on saccadic movements to avoid dwell times. The idea of StarWrite is to combine eye typing movements with feedback. Users, mostly novices, tend to look at the text field after each selection to check what has been written. Here letters are typed by dragging them into the text field. This provides instantaneous visual feedback and should spare checking saccades towards the text field. When a character is fixated, both it and its neighbors are highlighted and enlarged to facilitate character selection. In order to use x- and y-coordinates for target selection, letters were arranged alphabetically on a half-circle in the upper part of the monitor. The text window appeared in the lower field. StarWrite provides lower case, upper case, and numerical entry modes, which can be switched by fixating the corresponding buttons, situated in the lower part of the application, for 500 milliseconds. The space, delete and enter keys are also placed there and are likewise driven by a 500 ms dwell time. StarWrite was implemented in C++ using OpenGL libraries for the visualization."
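
The half-circle layout and the neighbor highlighting described above can be sketched like this. It is a Python illustration under stated assumptions, not StarWrite's C++/OpenGL code: the function names, the even angular spacing, and the example screen geometry are made up for the sketch.

```python
import math
import string


def half_circle_layout(center_x, center_y, radius, letters=string.ascii_lowercase):
    """Place letters alphabetically on the upper half-circle, left to right.

    Assumes even angular spacing; screen y grows downward, so the arc
    bulges towards smaller y values.
    """
    count = len(letters)
    positions = {}
    for i, letter in enumerate(letters):
        theta = math.pi - math.pi * i / (count - 1)  # pi (far left) down to 0 (far right)
        x = center_x + radius * math.cos(theta)
        y = center_y - radius * math.sin(theta)
        positions[letter] = (x, y)
    return positions


def nearest_letter(positions, gaze_x, gaze_y):
    """Return the letter whose position is closest to the gaze point."""
    return min(
        positions,
        key=lambda letter: math.hypot(positions[letter][0] - gaze_x,
                                      positions[letter][1] - gaze_y),
    )


def highlighted(letter, letters=string.ascii_lowercase):
    """Return the fixated letter together with its immediate neighbors."""
    i = letters.index(letter)
    return letters[max(0, i - 1): i + 2]


# Hypothetical geometry: arc centred at (512, 400) with a radius of 350 pixels.
layout = half_circle_layout(512, 400, 350)
fixated = nearest_letter(layout, 162, 400)  # gaze at the far left end of the arc
enlarged = highlighted("m")
```

Because every letter has a distinct x-coordinate on the arc, a gaze sample can be resolved to a letter from its position alone, which matches the stated reason for choosing this arrangement.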

Associated publications

- Huckauf, A. and Urbina, M. H. 2008. Gazing with pEYEs: towards a universal input for various applications. In Proceedings of the 2008 Symposium on Eye Tracking Research & Applications (Savannah, Georgia, March 26 - 28, 2008). ETRA '08. ACM, New York, NY, 51-54. [URL] [PDF] [BIB]

- Urbina, M. H. and Huckauf, A. 2007. Dwell time free eye typing approaches. In Proceedings of the 3rd Conference on Communication by Gaze Interaction - COGAIN 2007, September 2007, Leicester, UK, 65-70. Available online at http://www.cogain.org/cogain2007/COGAIN2007Proceedings.pdf [PDF] [BIB]

- Huckauf, A. and Urbina, M. 2007. Gazing with pEYE: new concepts in eye typing. In Proceedings of the 4th Symposium on Applied Perception in Graphics and Visualization (Tübingen, Germany, July 25 - 27, 2007). APGV '07, vol. 253. ACM, New York, NY, 141-141. [URL] [PDF] [BIB]

- Urbina, M. H. and Huckauf, A. 2007. pEYEdit: Gaze-based text entry via pie menus. In Conference Abstracts. 14th European Conference on Eye Movements ECEM2007. Kliegl, R. & Brenstein, R. (Eds.) (2007), 165-165.

Sunday, June 1, 2008

Project finalization and thesis defence

I've put together a video demonstration that will be released this week together with the thesis. It demonstrates the UI components and the prototype interface and will be posted to the major video sharing sites (YouTube etc.).

Thursday, May 22, 2008

GaCIT 2008 - Summer School on Gaze, Communication, and Interaction Technology

"Vision and visual communication are central in HCI. Researchers need to understand visual information processing and be equipped with appropriate research tools and methods for understanding users' visual processes.

The GaCIT summer school offers an intensive one-week camp where doctoral students and researchers can learn and refresh vision related skills and knowledge under the tutelage of leading experts in the area. There will be an emphasis on the use of eye tracking technology as a research tool. The program will include theoretical lectures and hands-on exercises, an opportunity for participants to present their own work, and a social program enabling participants to exchange their experiences in a relaxing and inspiring atmosphere."

The following themes and speakers will be included:

Active Vision and Visual Cognition (Boris Velichkovsky)

Topics will include issues in visual perception with relevant aspects of attention, memory, and communication.

Hands-on Eye Tracking: Working with Images and Video (Andrew Duchowski)

Topics will include: Eye tracking methodology and experimental design review; setting up an image study: feedforward visual inspection training; visualization and analysis; exercise: between-subjects static fixation analysis; and analysis of eye tracked video.

Designing Eye-Gaze Interaction: Supporting Tasks and Interaction Techniques (Howell Istance)

This part of the summer school will examine how user needs and tasks can be mapped on particular ways of designing gaze-based interaction. This will cover traditional desktop applications as well as 3D virtual communities and on-line games.

Eye tracking in Web Search Studies (Edward Cutrell)

Topics will include: Eye tracking research on web search and more general web-based analysis with a brief hands-on session to try out the techniques.

Participant Presentations

Time has been reserved for those participants that are doing or planning to do work related to the theme of the summer school to present and discuss their work or plans with the other participants.